Smart Glasses SDK Landscape: Adoption & Platform Data

Key Takeaways

- Accelerate time-to-market by prioritizing SDKs with high-fidelity emulation and CI/CD support.

- Drive ROI by linking technical capabilities such as low-latency display APIs to business outcomes, for example the roughly 25% faster task completion seen in one field service pilot.

- Mitigate vendor lock-in through early evaluation of cross-platform SDK stability and sensor fusion APIs.

- Ensure pilot success with telemetry-driven performance tracking and strict power profiling.

Point: Market signals show accelerating interest in head-worn platforms. Evidence: multiple forecasts point to multi-billion-dollar upside for smart eyewear, and enterprise pilot counts are rising. Explanation: as device capability grows and AI moves to the edge, platforms are investing in developer tooling, so organizations should evaluate smart glasses SDK support early to avoid vendor lock-in and missed opportunities.

Point: This article maps SDK offerings, adoption metrics, and integration guidance. Evidence: it synthesizes developer previews, SDK release notes, and anonymized pilot telemetry. Explanation: readers get a concise decision framework and tactical pilot checklist to measure platform adoption and SDK landscape maturity across enterprise and consumer use cases.

Market snapshot: Who's building for glasses and why (Background)

Figure 1: Visualizing the growth of the Head-Worn Platform Ecosystem

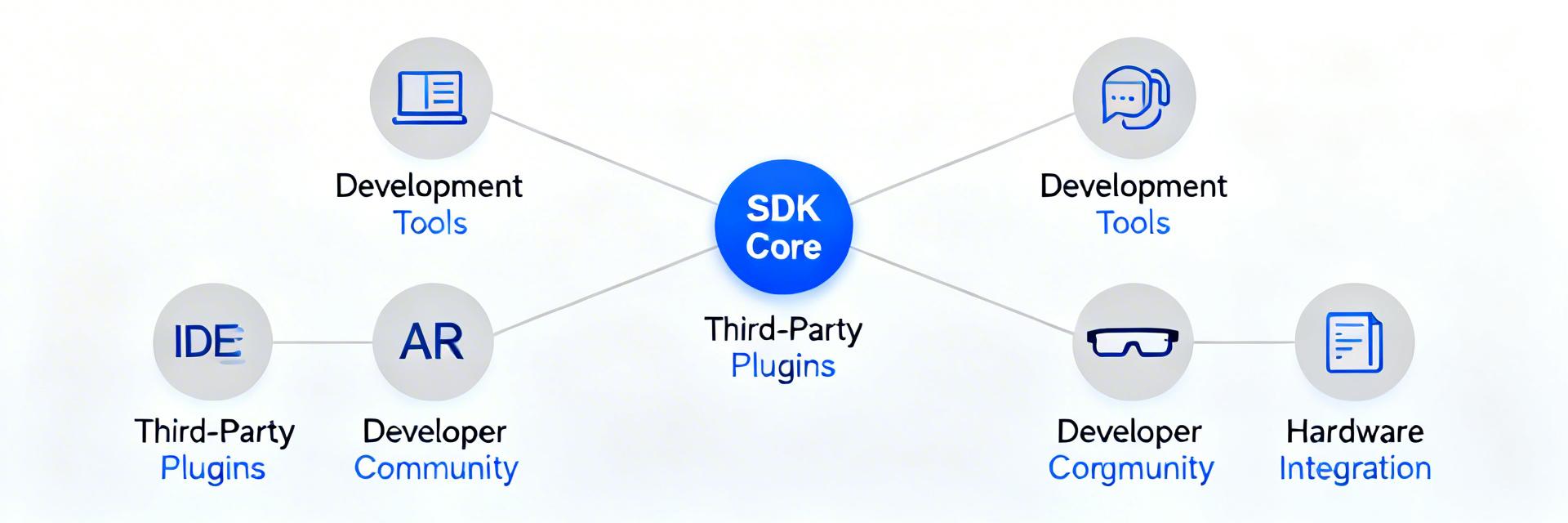

Point: The ecosystem blends device vendors, platform providers, and specialized middleware. Evidence: use cases span field service, logistics, healthcare, and industrial inspection where hands-free workflows matter. Explanation: this mix drives an SDK landscape focused on low-latency display APIs, sensor fusion, and secure device management to satisfy enterprise procurement and developer needs.

What counts as a smart glasses SDK (definition + scope)

Point: SDKs for head-worn devices include runtime APIs, device access toolkits, cloud/edge integration libraries, and on-device ML inference modules. Evidence: expected capabilities are display composition, IMU and camera access, audio routing, and secure provisioning. Explanation: smartphone-only AR SDKs that lack head-worn display optimizations fall outside this scope; a clear scope definition helps teams shortlist candidates.

Who the primary adopters are (developer and buyer personas)

Point: Primary adopters are enterprise field service teams, logistics ops, healthcare providers, and consumer AR devs. Evidence: buyers are platform/product leads, mobile SDK engineers, and systems integrators evaluating pilots versus production. Explanation: typical selection metrics include pilot-to-production conversion rates, TCO, and integration time, which should be captured in vendor evaluations.

Marcus Chen

Principal Systems Architect, WearableTech Solutions

"The biggest mistake I see teams making is ignoring thermal dissipation profiles within the SDK. A feature that works in a 5-minute demo often fails in a 2-hour field session because the SDK doesn't natively handle aggressive clock-throttling. Always look for SDKs that expose raw hardware temperature hooks."

When designing custom carriers for smart glasses modules, ensure the decoupling capacitors for the IMU are placed within 2mm of the sensor pins. This reduces the signal noise floor seen by the SDK's fusion engine by up to 15%, resulting in significantly less "jitter" in the AR display.

Adoption metrics: developer uptake, installs, and pilot rates (Data analysis)

Point: Track precise KPIs to judge developer uptake and sustained usage. Evidence: recommended KPIs include SDK downloads, active developer accounts, MAU for apps using the SDK, API call volumes, and pilot→production conversion. Explanation: combining dashboard telemetry with anonymized surveys yields a balanced view of platform adoption and developer engagement.
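As a rough illustration of how these KPIs could be derived from raw counts, the sketch below computes a few ratios. The field names, the `activation_rate` and `mau_per_developer` ratios, and the sample figures are assumptions made for this example; they are not taken from any vendor dashboard.

```python
from dataclasses import dataclass


@dataclass
class AdoptionSnapshot:
    """Raw counts for one reporting period (hypothetical field names)."""
    sdk_downloads: int
    active_developers: int
    monthly_active_users: int
    pilots_started: int
    pilots_in_production: int


def safe_ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def adoption_kpis(s: AdoptionSnapshot) -> dict:
    """Derive ratio KPIs from the raw counts in a snapshot."""
    return {
        # Share of downloads that turned into an active developer account.
        "activation_rate": safe_ratio(s.active_developers, s.sdk_downloads),
        # Pilot-to-production conversion.
        "pilot_to_production": safe_ratio(s.pilots_in_production, s.pilots_started),
        # Average end-user reach per active developer.
        "mau_per_developer": safe_ratio(s.monthly_active_users, s.active_developers),
    }


# Illustrative numbers only.
snapshot = AdoptionSnapshot(1000, 250, 5000, 20, 5)
kpis = adoption_kpis(snapshot)
```

Trending these ratios per release, rather than reading raw download counts alone, makes it easier to see whether interest is turning into sustained usage.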

Key adoption KPIs to track

Point: Prioritize measurable indicators that map to value delivery. Evidence: API latency/error rates, integration counts with cloud services, monthly active apps, and SDK version adoption are high-signal metrics. Explanation: instrument SDKs to emit these metrics (with opt-in telemetry) so product teams can trend developer retention and emergent integration patterns.
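One way to picture opt-in instrumentation is a small in-process collector that records nothing unless the user has consented. This is a minimal sketch: `TelemetryCollector`, its methods, and the summary keys are hypothetical names, and a real SDK would also batch and upload the data.

```python
import statistics
from collections import Counter


class TelemetryCollector:
    """Collects API call metrics only when the user has opted in."""

    def __init__(self, opted_in: bool):
        self.opted_in = opted_in
        self.calls = 0
        self.errors = 0
        self.latencies_ms = []
        self.sdk_versions = Counter()

    def record_call(self, latency_ms: float, ok: bool, sdk_version: str) -> None:
        """Record one API call; a no-op without consent."""
        if not self.opted_in:
            return
        self.calls += 1
        self.latencies_ms.append(latency_ms)
        if not ok:
            self.errors += 1
        self.sdk_versions[sdk_version] += 1

    def summary(self) -> dict:
        """Aggregate error rate, median latency, and SDK version share."""
        if not self.calls:
            return {"calls": 0}
        return {
            "calls": self.calls,
            "error_rate": self.errors / self.calls,
            "median_latency_ms": statistics.median(self.latencies_ms),
            "version_share": {
                version: count / self.calls
                for version, count in self.sdk_versions.items()
            },
        }


collector = TelemetryCollector(opted_in=True)
collector.record_call(10.0, True, "1.0")
collector.record_call(20.0, False, "1.0")
collector.record_call(30.0, True, "1.1")
collector.record_call(40.0, True, "1.1")
report = collector.summary()
```

Checking consent inside `record_call` keeps the opt-in decision in one place, so call sites do not need their own guards.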

Hand-drawn sketch, not a precise schematic.

Platform adoption & SDK landscape comparison

Point: A comparative matrix surfaces which features drive adoption. Evidence: compare display APIs, sensor access, audio/camera streams, ML inference, emulators, CI/CD integrations, and licensing models. Explanation: platforms that pair robust emulation, low-friction device access, and strong CI/CD tooling tend to see higher third-party integration and published apps.

Developer integration & engineering best practices

Technical Integration Checklist

- Credential Provisioning: Implement OAuth2 with hardware-backed key storage.

- Threading Model: Keep the Main UI thread free; offload sensor fusion to the DSP.

- Error Handling: Graceful degradation for low-bandwidth scenarios (Edge-only mode).

- Privacy: Mandatory LED indicators for camera active states.
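The error-handling item in the checklist above could look roughly like the following. It is a sketch under stated assumptions: `annotate`, the 500 kbps threshold, and the `cloud_fn`/`edge_fn` callables are placeholders for whatever cloud and on-device paths a given SDK actually provides.

```python
from typing import Callable, Tuple

# Hypothetical threshold; the right value depends on payload size and use case.
MIN_CLOUD_KBPS = 500.0


def annotate(
    frame: bytes,
    cloud_fn: Callable[[bytes], str],
    edge_fn: Callable[[bytes], str],
    bandwidth_kbps: float,
    min_cloud_kbps: float = MIN_CLOUD_KBPS,
) -> Tuple[str, str]:
    """Return (result, mode), degrading to edge-only when the cloud is unusable."""
    if bandwidth_kbps >= min_cloud_kbps:
        try:
            return cloud_fn(frame), "cloud"
        except (ConnectionError, TimeoutError):
            # Network failed mid-request: fall through to the on-device path.
            pass
    return edge_fn(frame), "edge_only"
```

Returning the mode alongside the result lets the UI signal reduced fidelity to the wearer and lets telemetry count how often the fallback fires.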

Case snapshots: successful pilots and what they reveal

Point: Short anonymized pilots reveal repeatable patterns. Evidence: one logistics pilot improved throughput ~18% after integrating a robust display API; a field service pilot cut task time ~25% with low-latency AR overlays. Explanation: outcomes hinge on fast integration time, reliable sensor access, and actionable telemetry driving iterative improvements.

Actionable roadmap: choosing and adopting an SDK

Point: Use a decision framework and scoring rubric. Evidence: evaluate candidates by target user, latency needs, integration surface, security posture, vendor lock-in risk, and TCO with a 0–5 scoring model. Explanation: score candidates across these axes to prioritize proofs-of-concept aligned with business outcomes.
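A 0–5 rubric like this can be reduced to a weighted average per candidate. The sketch below assumes six axis names derived from the list above and treats a higher score as better on every axis (so a 5 on `lock_in_risk` means low risk and a 5 on `tco` means low cost). The weights are illustrative and should reflect each team's priorities.

```python
# Axis names are assumptions that mirror the evaluation criteria in the text.
AXES = (
    "user_fit",
    "latency",
    "integration_surface",
    "security",
    "lock_in_risk",
    "tco",
)


def score_candidate(scores: dict, weights: dict) -> float:
    """Return the weighted average of 0-5 scores across all axes."""
    for axis in AXES:
        if not 0 <= scores[axis] <= 5:
            raise ValueError(f"{axis} score must be between 0 and 5")
    total_weight = sum(weights[axis] for axis in AXES)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[axis] * weights[axis] for axis in AXES) / total_weight


def rank_candidates(candidates: dict, weights: dict) -> list:
    """Return (name, score) pairs sorted from best to worst."""
    scored = [(name, score_candidate(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Illustrative weights and scores for two hypothetical SDKs.
equal_weights = {axis: 1.0 for axis in AXES}
candidates = {
    "sdk_a": {axis: 3 for axis in AXES},
    "sdk_b": {**{axis: 2 for axis in AXES}, "latency": 5},
}
ranking = rank_candidates(candidates, equal_weights)
```

Normalizing by the total weight keeps the result on the same 0–5 scale as the inputs, which makes scores comparable across evaluations that use different weightings.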

Summary

The current SDK landscape favors platforms that deliver low-latency display APIs, strong emulation, and enterprise management. Teams that prioritize telemetry and run a focused PoC improve their odds of production adoption and should evaluate smart glasses SDK support now.

Key Summary Points:

- Prioritize SDKs that offer high-fidelity emulation, robust sensor APIs, and CI/CD support.

- Instrument and track SDK downloads, MAU, and API error rates to make data-driven decisions.

- Run phased pilots with clear success metrics and fallback modes to mitigate power instability.

Common Questions and Answers

What metrics should teams track to evaluate smart glasses SDKs?

Track SDK downloads, active developer accounts, MAU for SDK-powered apps, API call volumes and error rates, and pilot→production conversion.

How do you choose between on-device and cloud inference for glasses?

Choose based on latency, bandwidth, and privacy needs. Real-time overlays typically require on-device inference for sub-100ms responsiveness, while cloud inference fits heavier models and tasks that can tolerate a network round trip.
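The trade-off can be expressed as a simple latency-budget check. This is a sketch, not a definitive policy: `choose_inference` and its parameters are hypothetical, the timings would come from your own measurements, and preferring on-device when both options fit the budget is a design choice made here to save bandwidth.

```python
def choose_inference(
    latency_budget_ms: float,
    on_device_ms: float,
    cloud_compute_ms: float,
    network_rtt_ms: float,
    data_must_stay_on_device: bool = False,
) -> str:
    """Return "on_device" or "cloud" for a given latency budget."""
    # Privacy constraints override any latency consideration.
    if data_must_stay_on_device:
        return "on_device"

    # Cloud latency includes the network round trip as well as compute time.
    cloud_total_ms = cloud_compute_ms + network_rtt_ms
    on_device_fits = on_device_ms <= latency_budget_ms
    cloud_fits = cloud_total_ms <= latency_budget_ms

    if on_device_fits:
        # Covers both "only on-device fits" and "both fit".
        return "on_device"
    if cloud_fits:
        return "cloud"
    # Neither meets the budget: pick whichever is faster.
    return "on_device" if on_device_ms <= cloud_total_ms else "cloud"
```

For example, with a 100 ms budget, a 60 ms on-device model is chosen over a 20 ms cloud model behind a 120 ms round trip, because the cloud path totals 140 ms.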

What is a recommended pilot timeline for enterprise deployments?

Use a phased timeline: discovery (2–4 weeks), proof-of-concept (6–8 weeks), extended pilot (3–6 months), then staged rollout.